The Core of our Media Servers

Backstage is our high-performance media server software for real-time video playback, GPU-accelerated pixel processing, and multi-output mapping, engineered for show-critical applications. It enables real-time multi-layer compositing and simultaneous multi-surface output mapping across displays, LED walls, and projection systems, including edge-blended multi-projector setups, adaptable to arbitrary screen geometries and complex output topologies. Outputs can be configured as straight GPU feeds, rectangular splits, or custom pixel-mapped layouts to match demanding LED and projection pipelines.

Backstage operates with a future-proof 8 / 10 / 12-bit processing pipeline, optimized for uncompressed image sequences to deliver uncompromising image quality and high-bandwidth performance. It also supports selected modern codecs such as NotchLC, HAP, H.264/H.265/H.266, AV1, VP9, and MPEG2, plus common encryption (CENC) for protected media workflows. Supported sources include still images, multichannel audio, Notch Blocks, HTML5, NDI (HQ/HX), Spout/Unity textures, and capture input through dedicated hardware.

For synchronized multi-server deployments, Backstage provides frame-accurate playback synchronization via PTP v2 (self-generated or external) and supports LTC timecode input/output through audio or dedicated hardware. The render architecture supports multiple output refresh rates via render groups and can align GPU output timing to the media frame rate, with optional frame blending for frame rate conversion.
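As a rough illustration of frame blending for frame rate conversion, the sketch below computes which two source frames contribute to a given output frame and with what weight. It is a generic linear blend and not Backstage's actual implementation; the function name `blend_weights` and the use of exact fractions are assumptions made for this example.

```python
from fractions import Fraction
from math import floor


def blend_weights(out_index, src_fps, out_fps):
    """Return (frame_a, frame_b, weight_b) for one output frame.

    The output frame shown at time out_index / out_fps falls between
    source frames frame_a and frame_b. weight_b is the share of frame_b
    in a linear mix: out = (1 - weight_b) * a + weight_b * b.
    """
    # Position of the output frame on the source timeline, in source frames.
    pos = Fraction(out_index) * Fraction(src_fps) / Fraction(out_fps)
    frame_a = floor(pos)
    weight_b = pos - frame_a
    return frame_a, frame_a + 1, weight_b
```

For 24 fps media on a 60 Hz output, `blend_weights(1, 24, 60)` selects source frames 0 and 1 with a weight of 2/5 on frame 1. When the weight is 0, the output frame coincides with a source frame and no blending is needed.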

Color management is handled per media and per output, supporting SDR and HDR pipelines through configurable color primaries (BT.601, BT.709, BT.2020, DCI-P3, AdobeRGB, custom RGBW) and transfer functions (Gamma, sRGB, ST.2084/PQ, HLG, Log, Linear). Backstage provides passthrough when compatible and conversion when required to ensure consistent reproduction across heterogeneous systems.

For show control and integration, Backstage includes a node-based visual programming environment interfacing with internal playback/render components (sequencer, assets, mappings, surfaces, mixer, outputs) and external systems via UDP, TCP, ArtNet, and sACN. It supports headless operation with UI streaming to Windows and macOS clients, enabling multi-user access and custom operator control panels.

The output subsystem supports GPU display pipelines, NDI, ArtNet, shared memory/texture exchange, audio routing, and recording to DPX, SBSM, H.264, and AV1. Pixel processing includes real-time keying (color/chroma) and advanced blend/compositing modes.

Backstage also supports stereoscopic workflows via stereo containers and multiple 3D formats including side-by-side, top/bottom, interleaved, passive/active 3D, and whiteline code.

Basic Workflow

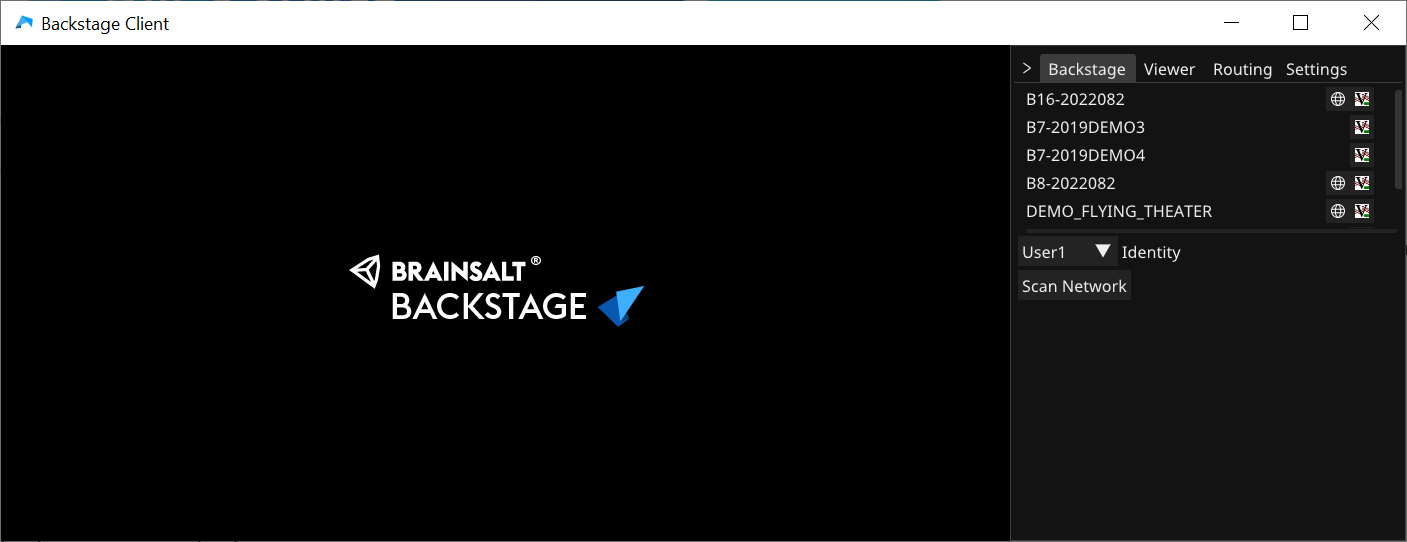

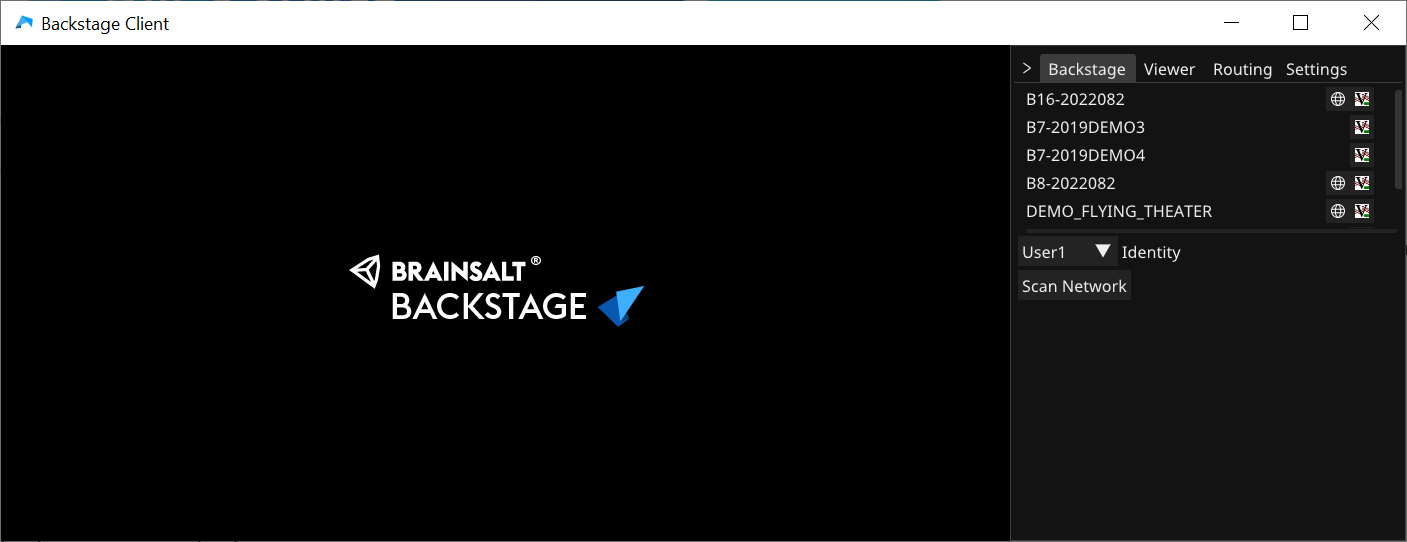

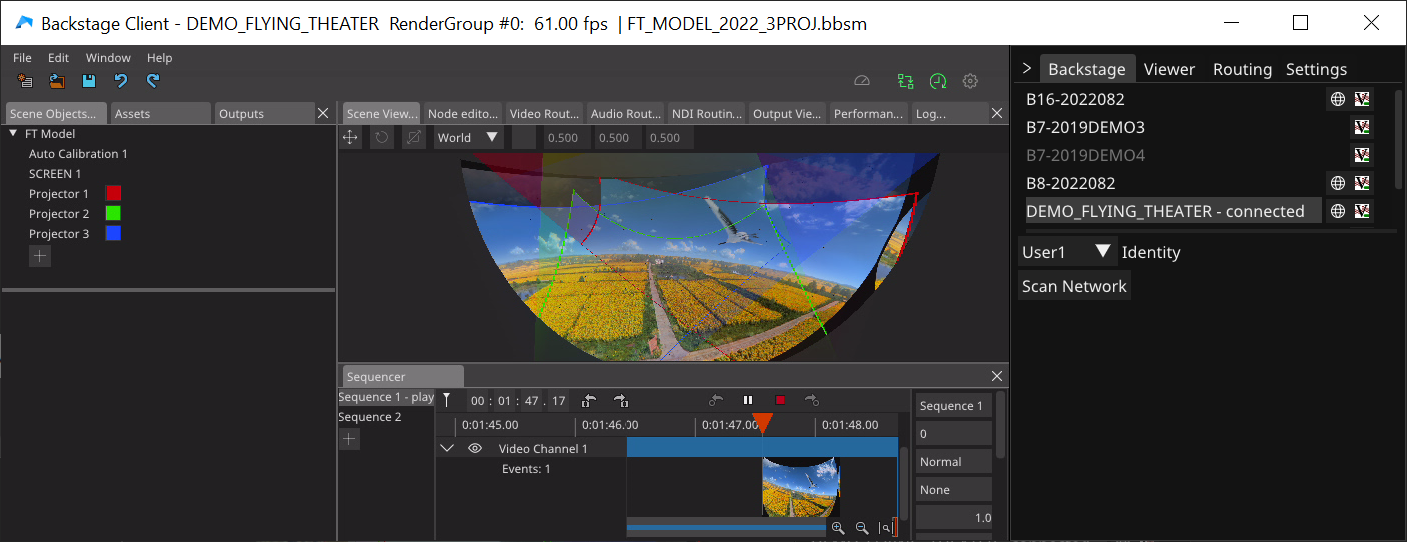

Backstage streams its GUI over the network to the Backstage Client, a tool that ships with the server and is available for PC and Mac. It is fully multi-user compatible: User1 can set up warping while User2 programs show logic and User3 modifies media sequences. After startup, the client shows all available servers in the network.

Double-clicking one of the servers opens the GUI for the selected identity.

The GUI consists of freely arrangeable, dockable windows, and the layout is fully adjustable. Presets can be saved and recalled individually for each user.

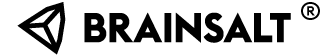

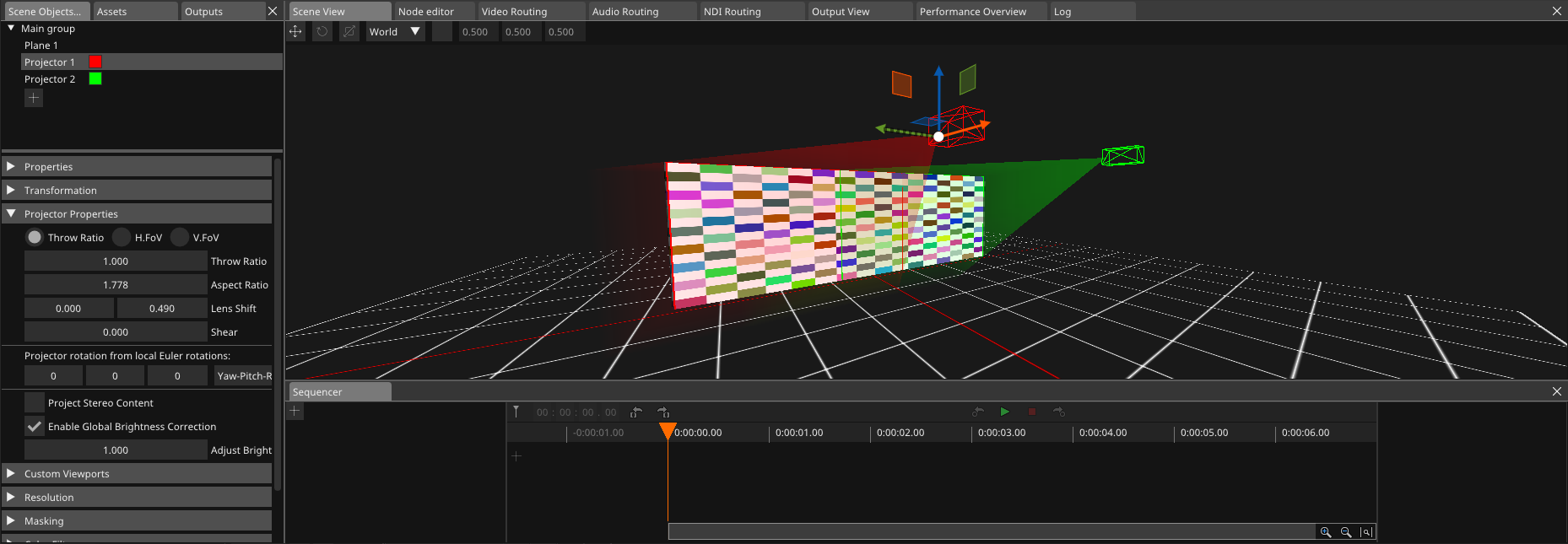

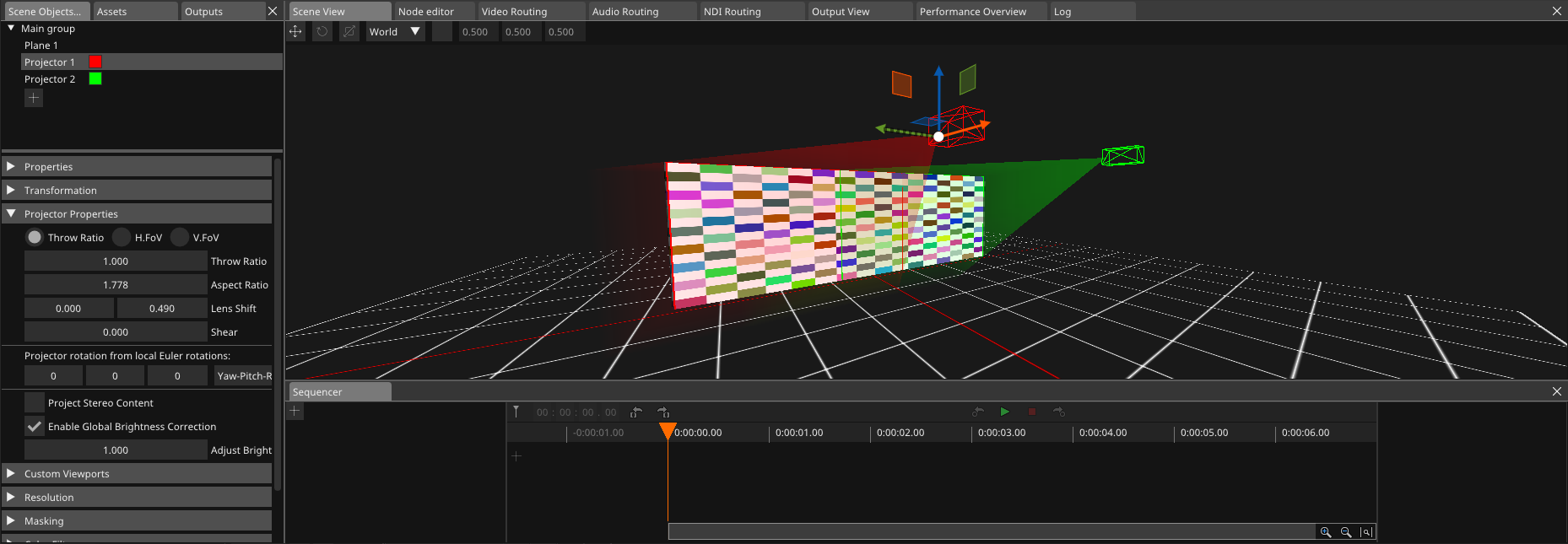

It all starts with a 3D scene

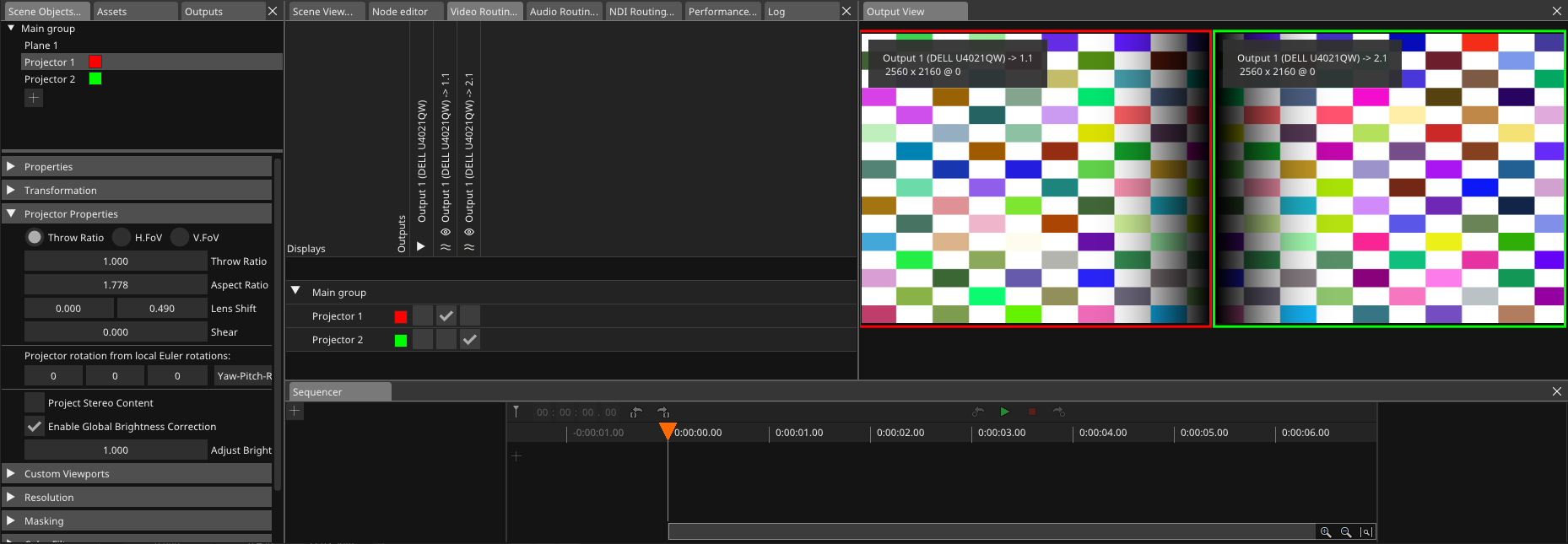

Backstage lets you build your "real world" environment in its 3D editor. A scene can be as simple as a single display or as complex as a multi-channel (3D) projection onto any screen surface. This sample scene shows a panorama projection with two projectors.

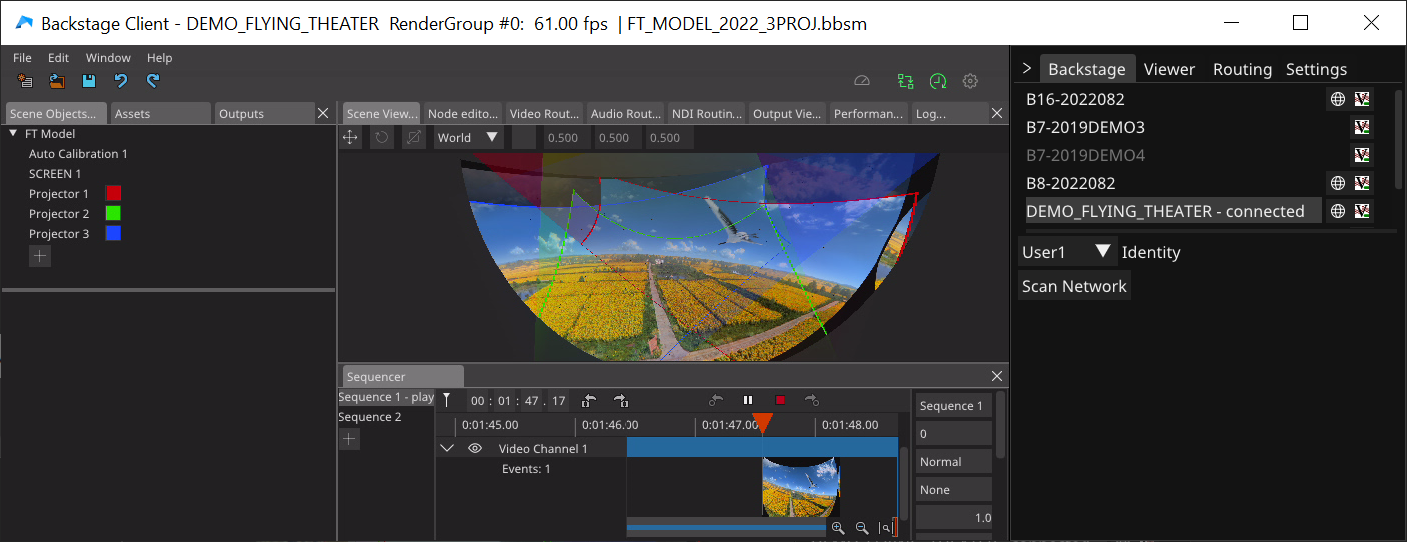

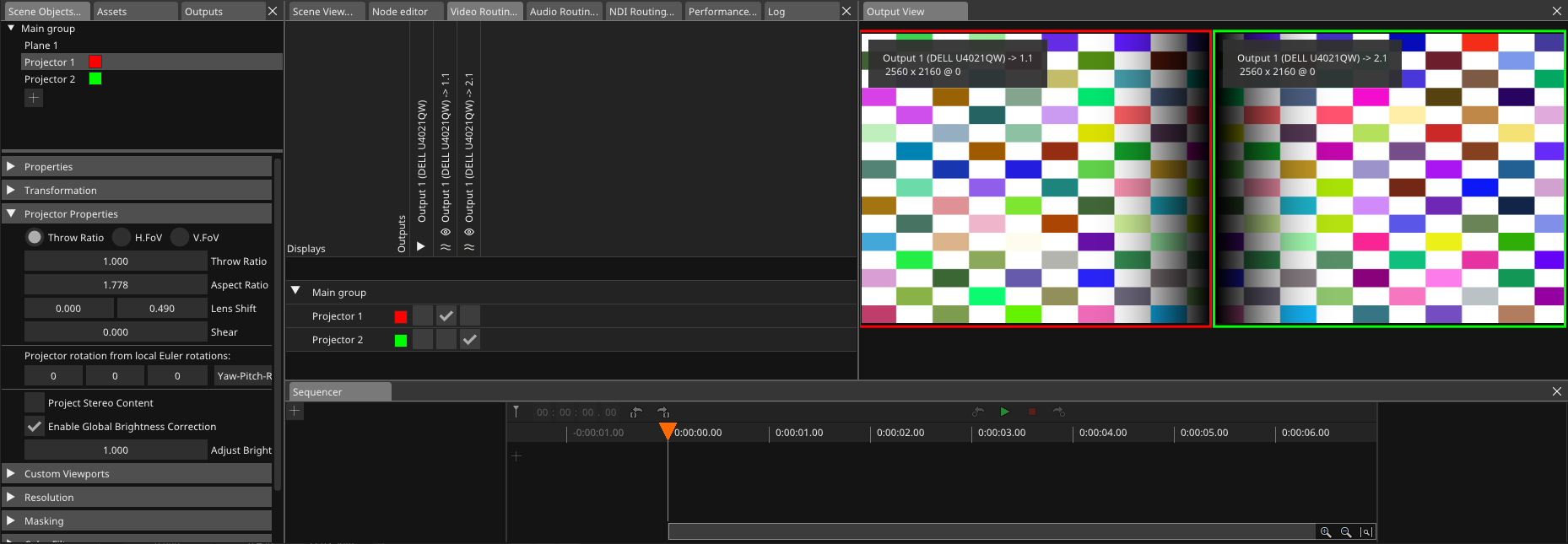

Connect Outputs

To get the projector view to a GPU output, simply route the projector in the Video Routing window. The Output View will show you the actual image sent to the output.

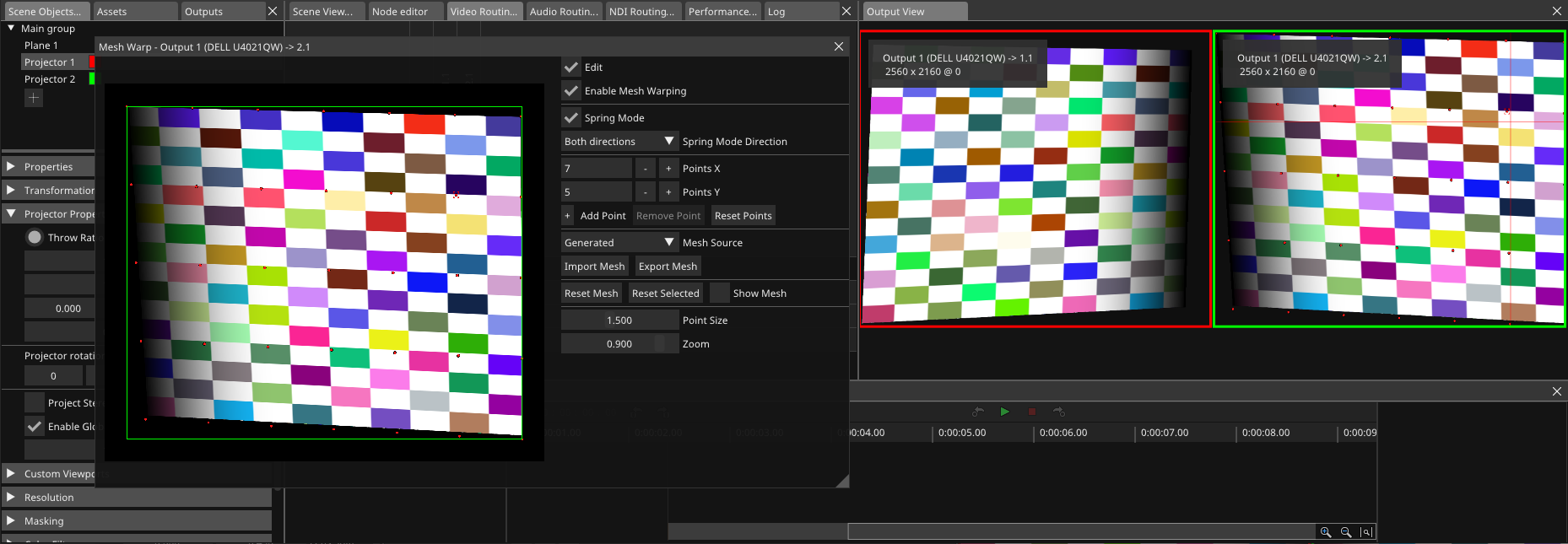

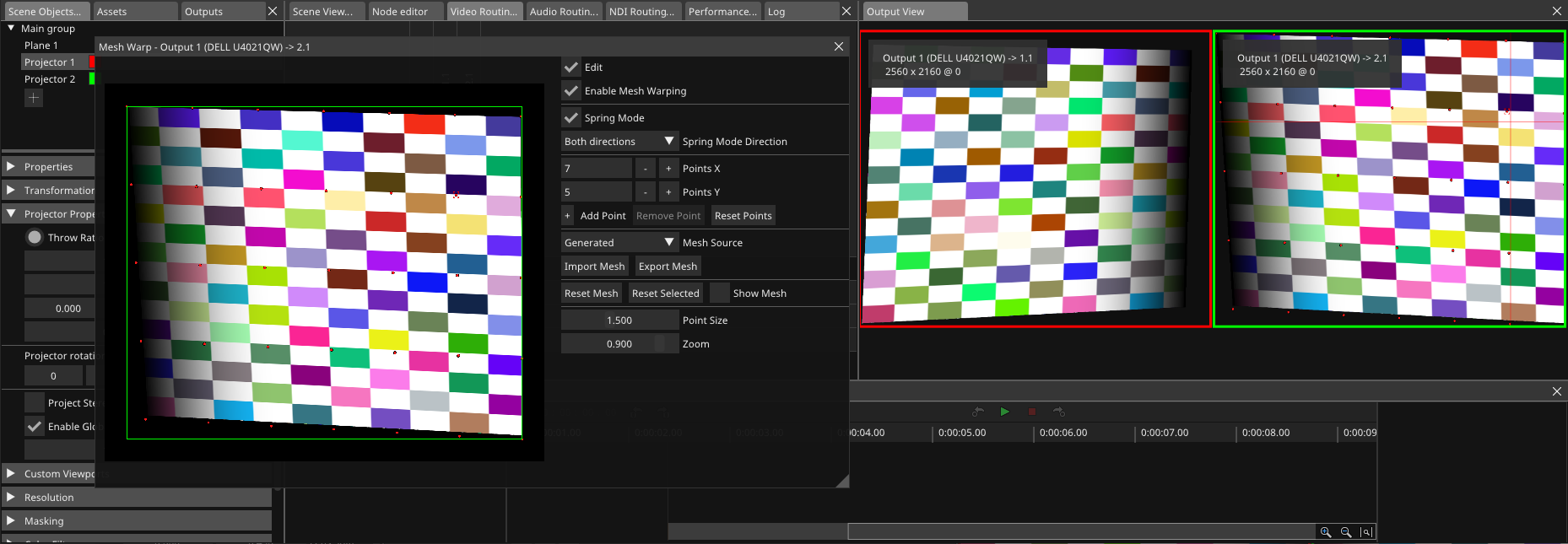

Apply Warping

When you are not working with our camera-based auto-calibration software, you can apply output warping manually.

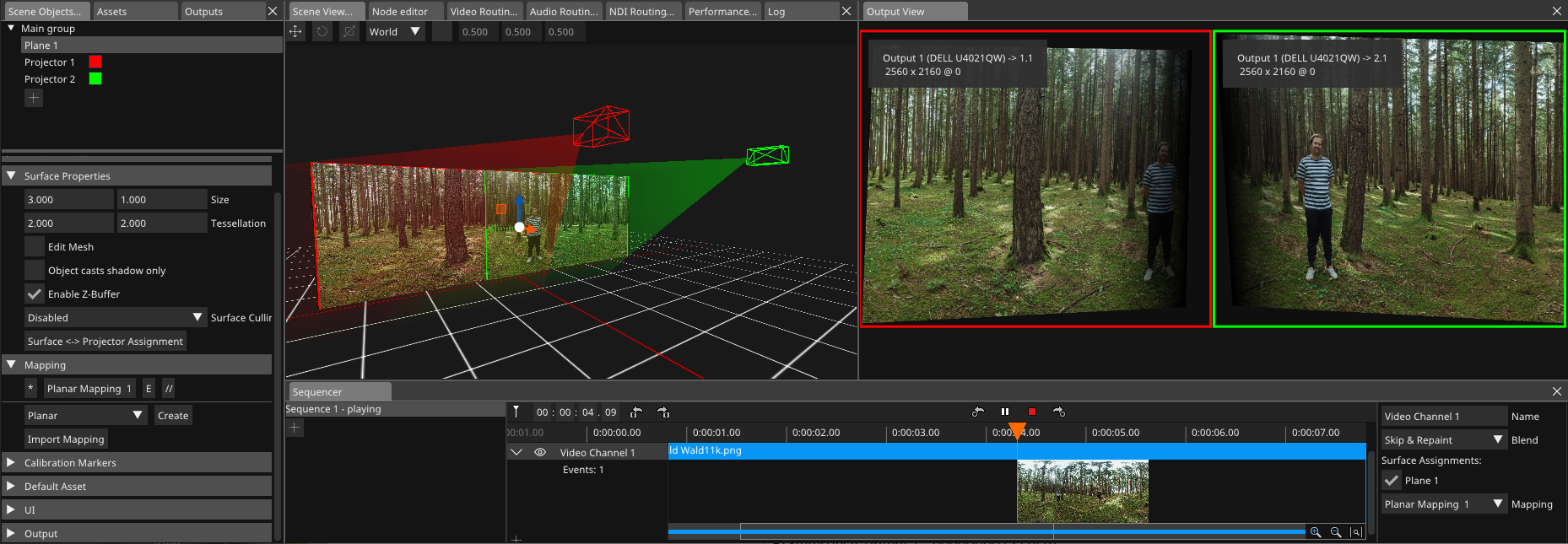

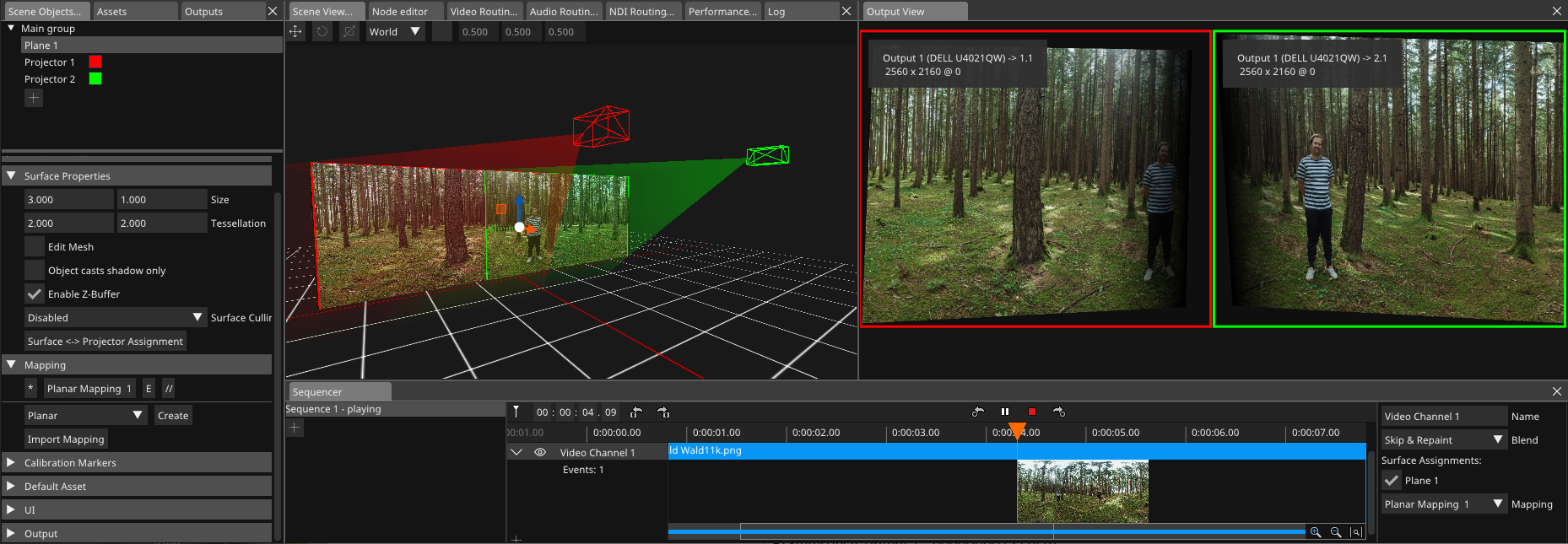

Load Content and put on Screen

Now load your media through the asset manager and put it into the sequencer by drag and drop. A video channel in a sequencer can be routed to one or multiple screens and mappings in the 3D scene.

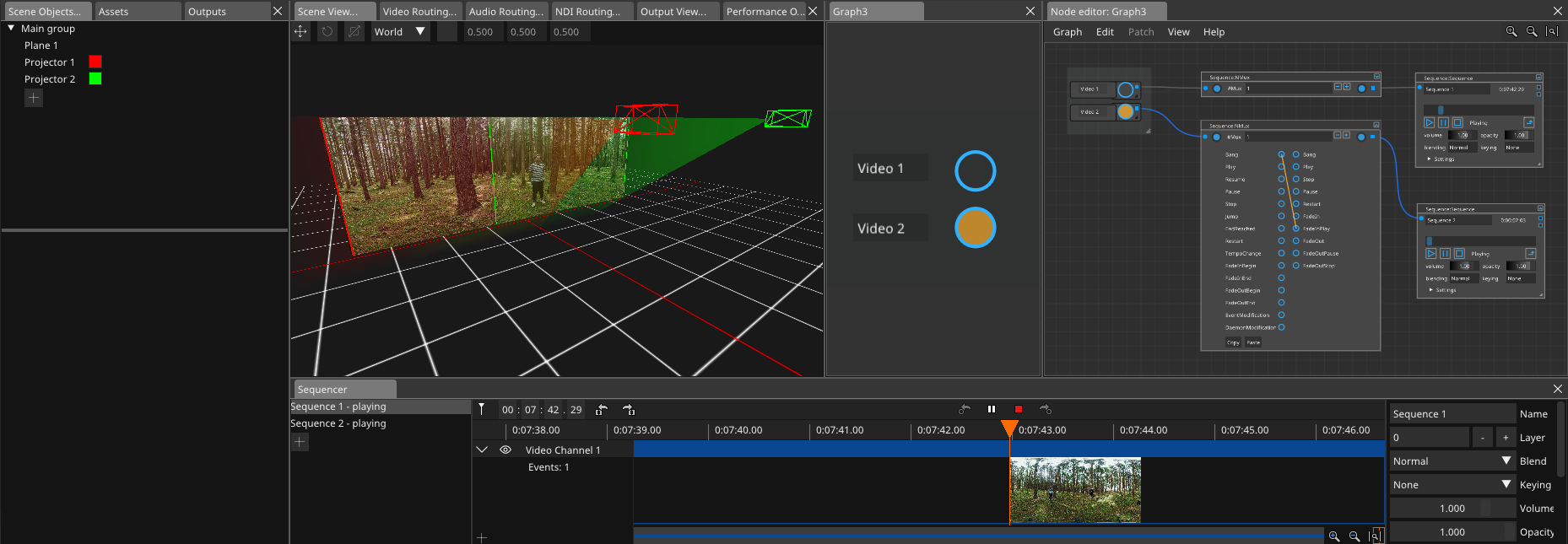

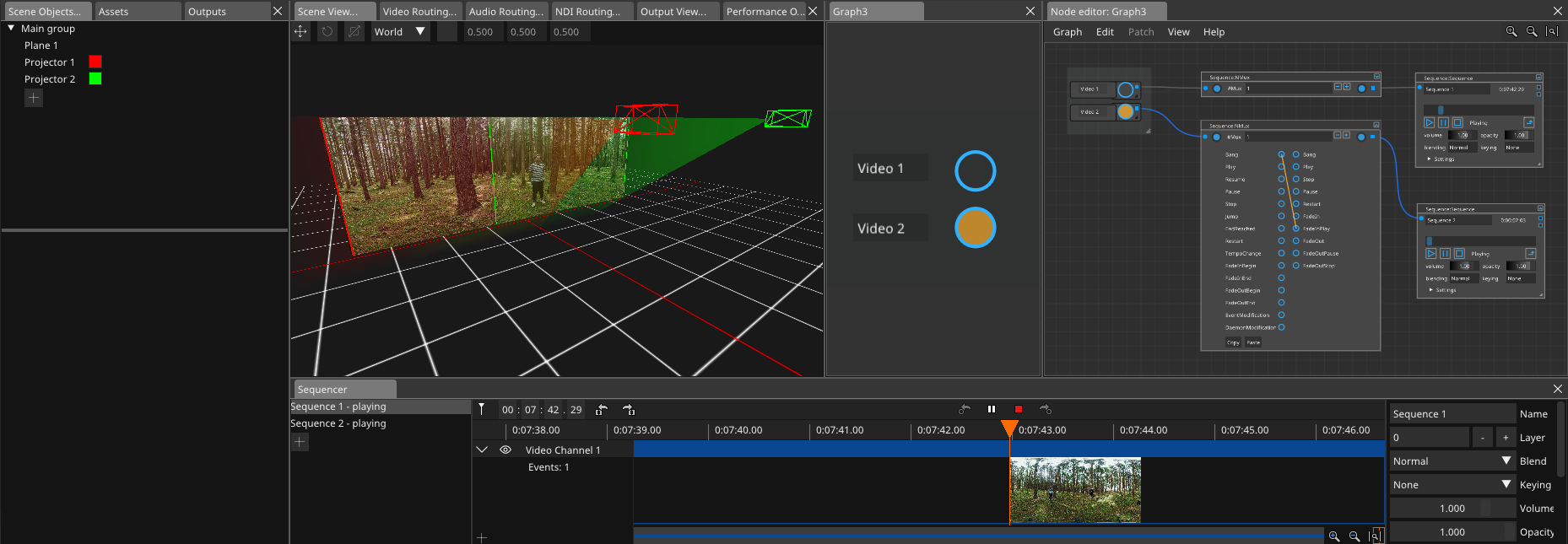

Automate Show Logic in Node Editor

Define triggers from external events or from freely definable GUI elements. For example, a sequencer can start playing with a fade-in when a button is pressed.

Technical Specifications

Supported media types

- Uncompressed Video (DPX, TGA, TGA Alpha, TIFF, SBSM)

- Compressed Video (NotchLC, HAP, HAP Alpha, HAP Q, HAP Q Alpha, HAP R, H.264, H.265, H.266, AV1, VP9, MPEG2)

- Support for common encryption (CENC)

- Still images (JPG, PNG, TGA, SPX, TIFF, BMP)

- Audio (WAV, AIFF, AAC, MPEG3, OGG)

- Notch Blocks, including timeline-based adjustment of exposed parameters

- Webpages (HTML5)

- NDI (HQ, HX)

- Texture (SPOUT, Unity through plugin)

- Capture Input (through dedicated hardware)

- Support for realtime tempo adjustment and reverse playback for uncompressed video and audio

Color space

- Individually adjustable for each media and each output

- Supported Primaries: BT.601, BT.709, BT.2020, DCI-P3, Adobe RGB, Custom (RGBW)

- Supported Transfer: Gamma, sRGB, ST.2084, HLG, Log, Linear

- Handling: Passthrough, if media and output color primaries and transfer are identical, otherwise converted
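To make the transfer-function terminology concrete, the sketch below implements the ST.2084 (PQ) curve using the constants published in the standard. It only illustrates what a PQ conversion step computes; how Backstage performs its conversions internally is not described here, and the function names `pq_encode` and `pq_decode` are chosen for this example.

```python
# SMPTE ST 2084 (PQ) constants as defined in the standard.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits):
    """Inverse EOTF: absolute luminance in cd/m^2 (0..10000) -> signal 0..1."""
    y = max(nits, 0.0) / 10000.0
    yp = y ** M1
    return ((C1 + C2 * yp) / (1.0 + C3 * yp)) ** M2


def pq_decode(signal):
    """EOTF: non-linear signal 0..1 -> absolute luminance in cd/m^2."""
    ep = max(signal, 0.0) ** (1.0 / M2)
    return 10000.0 * (max(ep - C1, 0.0) / (C2 - C3 * ep)) ** (1.0 / M1)
```

A conversion between two transfer functions typically decodes to linear light with the source curve and re-encodes with the target curve. Passthrough skips both steps when source and target match.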

Outputs

- Support for different output refresh rates through render groups. Support to adjust GPU output refresh rate dependent on media framerate. Framerate Conversion through frame-blending if needed.

- Warping, Blending, Brightness Correction

- Black Level Correction (with Optional Calibrator)

- GPU (straight, rectangular splits or custom pixel mapped)

- NDI (HQ)

- DMX (ArtNet)

- Shared Memory, Shared Texture

- File Recording (DPX, SBSM, H.264, AV1)

- Audio Mixer (Matrix, Ambisonic)

- Audio (ASIO)

Supported stereoscopic media workflows

- Any supported media type can be placed into a stereo container with left and right eye media (total 2 channel or 4 channel) and used in the sequencer like any other media

- Active 3D capture through DP 1.2 capture card

- Supported output formats (on GPU outputs): Passive 3D, Active 3D, Whiteline code, Line-interleaved, Column-interleaved, Side-by-side, Top-Bottom
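The packed output formats listed above can be pictured with a small sketch that combines a left-eye and a right-eye frame. Frames are modelled as plain lists of pixel rows, which is an assumption for illustration only. The choice of which eye occupies the even lines is also an assumption, and the sketch does not rescale the images the way a real side-by-side pipeline usually does.

```python
def pack_side_by_side(left, right):
    """Place left-eye and right-eye rows next to each other (width doubles)."""
    return [l_row + r_row for l_row, r_row in zip(left, right)]


def pack_line_interleaved(left, right):
    """Take even rows from the left eye and odd rows from the right eye."""
    return [
        (left if y % 2 == 0 else right)[y]
        for y in range(min(len(left), len(right)))
    ]


# Two tiny 2x2 test frames, one per eye.
left_eye = [["L", "L"], ["L", "L"]]
right_eye = [["R", "R"], ["R", "R"]]
sbs = pack_side_by_side(left_eye, right_eye)
interleaved = pack_line_interleaved(left_eye, right_eye)
```

Column-interleaved and top-bottom packing follow the same pattern, applied per column or by stacking the two row lists.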

Playback synchronisation

- Within a server network, sync is established through PTP version 2 (self-generated or external)

- LTC timecode input and output through audio or dedicated hardware.
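When working with timecode in show logic, it often helps to convert between the `HH:MM:SS:FF` notation and an absolute frame count. The helpers below are a minimal sketch for non-drop-frame timecode at integer frame rates; the function names are hypothetical and are not part of Backstage.

```python
def timecode_to_frames(tc, fps):
    """Convert non-drop-frame 'HH:MM:SS:FF' to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if ff >= fps:
        raise ValueError(f"frame field {ff} out of range for {fps} fps")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def frames_to_timecode(frames, fps):
    """Convert an absolute frame count back to non-drop-frame 'HH:MM:SS:FF'."""
    ss, ff = divmod(frames, fps)
    mm, ss = divmod(ss, 60)
    hh, mm = divmod(mm, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"
```

Drop-frame timecode (used with 29.97 fps material) skips certain frame numbers and needs additional handling that this sketch leaves out.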

Pixel processing functions

- Combine multiple media to single frame (render cluster capture)

- Key (Color, Chroma)

- Blend (Add, Subtract, Reverse Subtract, Min, Max, Multiply, Screen, Overlay, Luminosity, Colorburn, Colordodge, Contrast, Darken, Difference and many more)
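For readers unfamiliar with blend modes, the sketch below shows the commonly used textbook formulas for a few of them, applied per channel to normalized values in the range 0..1. These are the conventional definitions and are assumed here for illustration; the exact formulas Backstage uses on the GPU are not documented in this overview.

```python
def clamp01(x):
    """Limit a channel value to the displayable range 0..1."""
    return min(max(x, 0.0), 1.0)


# Conventional per-channel formulas; a is the base layer, b the layer on top.
BLEND_MODES = {
    "add": lambda a, b: clamp01(a + b),
    "multiply": lambda a, b: a * b,
    "screen": lambda a, b: 1.0 - (1.0 - a) * (1.0 - b),
    "darken": lambda a, b: min(a, b),
    "difference": lambda a, b: abs(a - b),
}


def blend_pixel(base, layer, mode):
    """Blend two RGB tuples channel by channel with the named mode."""
    fn = BLEND_MODES[mode]
    return tuple(fn(a, b) for a, b in zip(base, layer))
```

Multiply always darkens and Screen always brightens, which is why the two are often used as a pair for shadows and highlights.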

Show Logic

- Node based visual programming interface

- Access to many internal components (assets, sequencer, surfaces, mappings, mixer, outputs, etc.)

- Automate show progress and interface to 3rd parties (UDP, TCP, ArtNet, sACN)

- Generative nodes for subtitles, timecode, text

- Mathematical and logical functions

- Customized function blocks and UI creation
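A third-party system that wants to talk to the show logic over ArtNet has to produce well-formed packets. The sketch below builds an ArtDmx packet following the public Art-Net packet layout. It only illustrates the external side of such an integration; the function name `build_artdmx` is hypothetical, and how incoming channels are mapped to nodes inside Backstage is not covered here.

```python
import struct

ARTNET_PORT = 6454  # standard Art-Net UDP port


def build_artdmx(universe, dmx_data, sequence=0):
    """Build an ArtDmx packet carrying DMX channel values for one universe."""
    data = bytes(dmx_data)
    if len(data) % 2:
        data += b"\x00"  # the payload length must be even
    if not 2 <= len(data) <= 512:
        raise ValueError("ArtDmx carries between 2 and 512 channel bytes")
    if not 0 <= universe < 32768:
        raise ValueError("universe must fit into the 15-bit port address")
    return (
        b"Art-Net\x00"                  # packet ID
        + struct.pack("<H", 0x5000)     # OpCode ArtDmx, little-endian
        + struct.pack(">H", 14)         # protocol version 14, big-endian
        + bytes([sequence & 0xFF, 0])   # sequence number, physical port
        + struct.pack("<H", universe)   # port address: SubUni byte, then Net byte
        + struct.pack(">H", len(data))  # payload length, big-endian
        + data
    )


packet = build_artdmx(1, [255, 0, 128, 64])
```

The resulting bytes can be sent with a standard UDP socket to the receiver's IP address on port 6454.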

Performance Monitoring and Logging

- Track system performance and log important events for analysis and optimization

Data Maintenance

- Monitoring hard drive performance and addressing the effects of data aging on solid-state drives to ensure smooth operation

Headless Operation – User Interface

- User interfaces for users and operators are streamed over the network to dedicated client software (Mac, PC)

- Multiple users can work on the server at the same time

- User interfaces for operators can be created in node based visual programming interface

Movie Licensing System

- Movie and license management through internet for encrypted movies (CENC)

Annotation Tool

- Freeze media and annotate it with multiple users from a tablet

- Save screenshots and annotations to PDF

- Requires Windows tablet for running our annotation software

There is much more to tell...

Contact us with your project requirements. We are happy to help plan and realize your project with our servers.